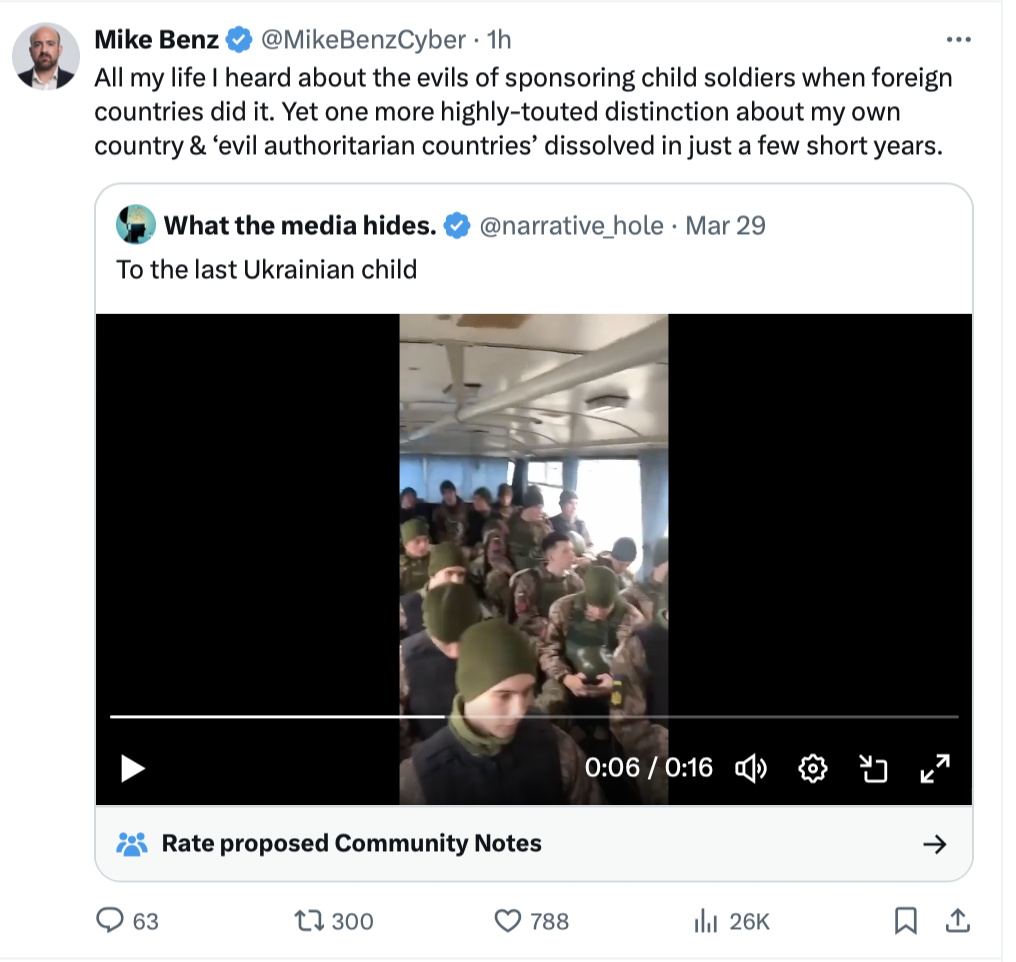

Ukrainian Children Shoved Into the Meat-Grinder

Here's something you will never see on the U.S. corporate media outlets that cheered the U.S. into supporting this miserable carnage.

All of this could have been avoided, but Joe Biden and his crew of neocons who supported the Iraq debacle (Victoria Nuland and Antony Blinken) crave endless war (and see here).